The Imitation Game showcases the prolific life of Alan Turing, the mathematician who helped break the German Enigma code during World War II. The film takes its title from the test of machine intelligence that Turing proposed in his 1950 article "Computing Machinery and Intelligence," which poses the question: "Can machines think?" A second film, The Theory of Everything, presents the accomplishments of the theoretical physicist Stephen Hawking. Both movies were nominated for Best Picture at the 2015 Academy Awards, and both also speak to the historical relevance and foundational underpinnings of artificial intelligence (AI).

AI, the science of making computers perform activities that normally require human intelligence, has become a source of controversy in recent months. In December 2014, Stephen Hawking warned that "the development of full artificial intelligence could spell the end of the human race." Bill Gates, co-founder of Microsoft and of the Bill & Melinda Gates Foundation, shares Hawking's view, noting that he doesn't "understand why some people are not concerned" [about AI] and that he is "in the camp that is concerned about super intelligence." Elon Musk, co-founder of PayPal and Tesla Motors, agrees, warning that with AI "we are summoning the demon."

Opposing these views is Eric Horvitz, a director at Microsoft Research, who believes that humans will be very proactive about how AI systems are developed and deployed. IBM is similarly enthusiastic about AI: its Watson supercomputer won Jeopardy! in 2011 and is now being used to help diagnose and treat cancer.

The Technology Catalysts Map, developed with the Institute for the Future as a component of the Vitality Institute Commission on Health Promotion and the Prevention of Chronic Disease in Working-Age Americans, further highlights AI as a potential force for human and economic vitality.

Perceptions of risk associated with emerging technologies have been studied extensively by Paul Slovic, Professor of Psychology at the University of Oregon. In his 1986 article, he quotes William Ruckelshaus, who states: "To effectively manage risk, we must seek new ways to involve the public in the decision-making process.... They [the public] need to become involved early, and they need to be informed if their participation is to be meaningful."

AI is only one area where perceptions of risk can become muddled; electronic cigarettes, vaccines, and genetically modified organisms (GMOs) are others. The Vitality Institute aims to involve the public in addressing the risks associated with personalized health technology by developing, in partnership with the Institute of Medicine, a workshop on the ethical, legal, and social implications of these technologies, to be held later this year. In March 2015, the Vitality Institute will host a month-long public online consultation to gather input on related materials for the workshop. (Interested? Email me to find out more.)

While the potential of AI as a force for social good has yet to be determined, Hollywood and the broader media are already shaping individual perceptions of it. Involving the public in discussions on AI at the outset is critical to keeping the tech sector accountable for its collective actions while harnessing the potential of technology for progress.

Are you concerned about negative consequences associated with emerging technologies like artificial intelligence? What other examples of technologies can you think of where risk perceptions among the public may not be aligned? Who do you think will win the Academy Awards?

We want to hear from you! Contact the Vitality Institute @VitalityInst or Gillian Christie @gchristie34 on Twitter.

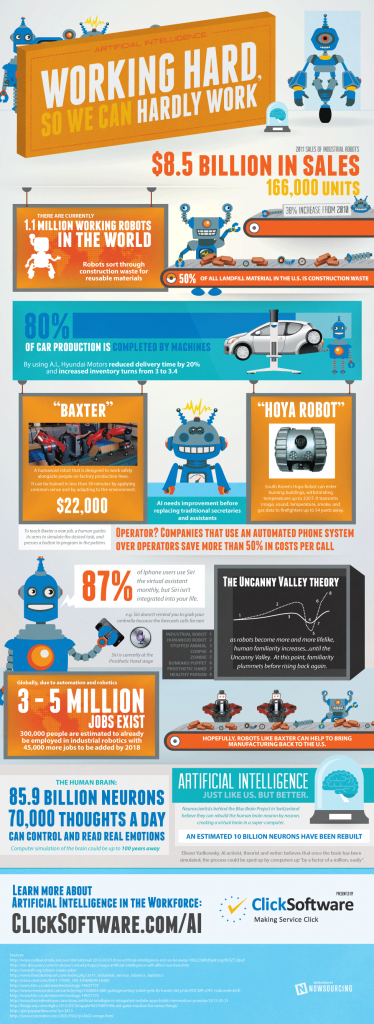

Source of the above Infographic: Bit Rebels

Source of Thumbnail image: http://cdn.arstechnica.net/wp-content/uploads/2012/10/Standard_Robot.jpg